Neural processes
11/11/2023

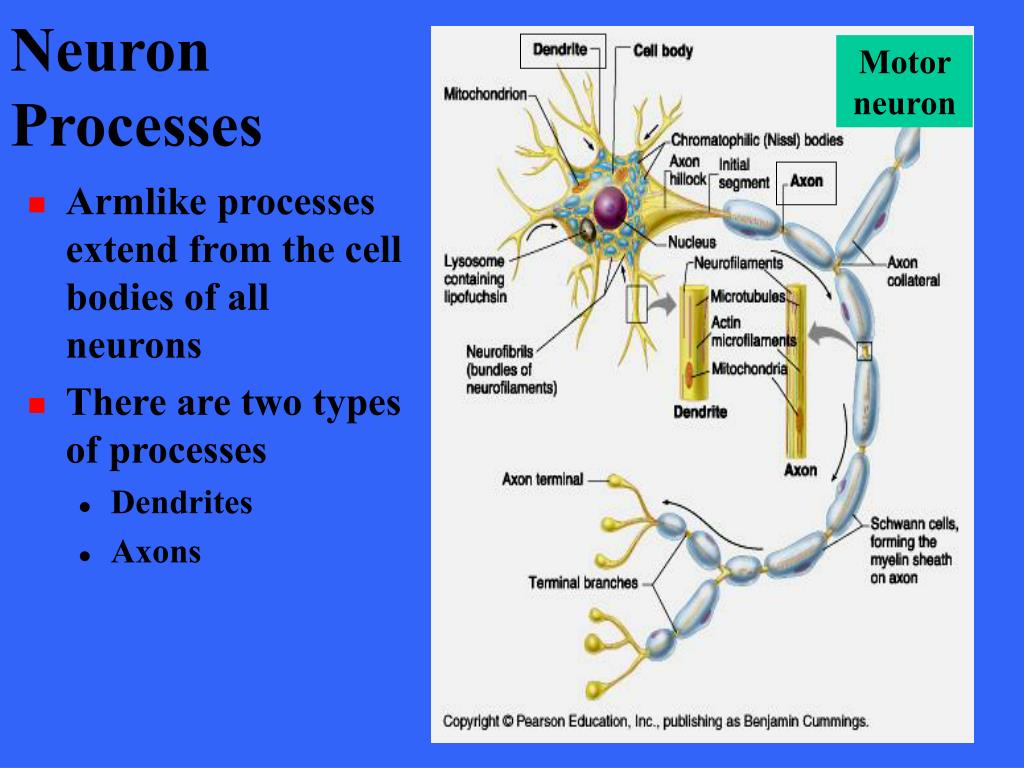

The Neural Process Family (NPF) is a collection of models, called neural processes (NPs), that meta-learn a distribution over functions. Conditional Neural Process (CNP) models in this family lack a systematic, fine-grained quantification of the distinct sources of uncertainty that are essential for model training and decision-making in the few-shot setting. Evidential Conditional Neural Processes (ECNP) address this by replacing the standard Gaussian distribution used by CNP with a much richer hierarchical Bayesian structure, obtained through evidential learning, which decomposes uncertainty into its epistemic and aleatoric parts. This evidential hierarchical structure also yields theoretically justified robustness to noisy training tasks. Theoretical analysis of ECNP establishes its relationship with CNP and offers deeper insight into the roles of the evidential parameters, and extensive experiments on both synthetic and real-world data demonstrate its effectiveness in various few-shot settings.

The term "neural processes" also has a different meaning in cognitive neuroscience. Across two independent experiments, one study directly compares the neural processes underlying controlled versus automatic retrieval of semantic and episodic memory.

Let's break down what a single node does. Each node receives the outputs of the previous layer as its inputs and sends its own output on to the next layer. Data is passed from one layer to the next in this one direction only, and that is what defines the network as a feedforward network.
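To make the node and layer idea concrete, here is a minimal pure-Python sketch. It assumes each node computes a weighted sum of its inputs plus a bias and then applies a sigmoid activation; the activation choice, the example weights, and the names `node`, `layer`, and `feedforward` are illustrative assumptions, not something the text above specifies.

```python
import math
from typing import List, Sequence, Tuple

# A layer is described by one weight row per node plus one bias per node.
Layer = Tuple[List[List[float]], List[float]]


def node(inputs: Sequence[float], weights: Sequence[float], bias: float) -> float:
    """One node: weighted sum of the inputs plus a bias, squashed by a sigmoid (assumed activation)."""
    z = sum(x * w for x, w in zip(inputs, weights)) + bias
    return 1.0 / (1.0 + math.exp(-z))


def layer(inputs: Sequence[float], weight_rows: List[List[float]], biases: List[float]) -> List[float]:
    """Every node in a layer reads the same inputs, i.e. all outputs of the previous layer."""
    return [node(inputs, w, b) for w, b in zip(weight_rows, biases)]


def feedforward(x: Sequence[float], network: List[Layer]) -> List[float]:
    """Pass data from one layer to the next; nothing flows backwards, hence 'feedforward'."""
    out = list(x)
    for weight_rows, biases in network:
        out = layer(out, weight_rows, biases)
    return out


# Hypothetical 2-input network: a hidden layer with 2 nodes, then a single output node.
network: List[Layer] = [
    ([[0.5, -0.2], [0.1, 0.4]], [0.0, 0.1]),
    ([[1.0, -1.0]], [0.0]),
]
y = feedforward([1.0, 2.0], network)
```

Swapping the sigmoid for another activation only changes the last line of `node`; the layer-to-layer flow stays the same.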

Proceedings of the 35th International Conference on Machine Learning, PMLR 80:1704-1713, 2018.

Conditional Neural Processes, by Marta Garnelo, Dan Rosenbaum, Christopher Maddison, Tiago Ramalho, David Saxton, Murray Shanahan, Yee Whye Teh, Danilo Rezende, S.

Neural ODE Processes (NDPs) maintain an adaptive, data-dependent distribution over the underlying ODE, which lets them capture the dynamics of low-dimensional systems from just a few data points. NDPs also scale up to challenging high-dimensional time series with unknown latent dynamics, such as rotating MNIST digits.

Evidential Conditional Neural Processes, by Deep Shankar Pandey and Qi Yu, starts from the observation that the Conditional Neural Process (CNP) family of models offers a promising direction for tackling few-shot problems, with better scalability and competitive predictive performance. However, current CNP models capture only the overall uncertainty of the prediction made at a target data point.
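One way to see what splitting that overall uncertainty means: in deep evidential regression, a model outputs the four parameters of a Normal-Inverse-Gamma (NIG) distribution for each target point, and closed-form expressions separate the predictive variance into an aleatoric part (noise in the data) and an epistemic part (uncertainty about the mean). The sketch below assumes ECNP uses this NIG parameterization (the text above only speaks of a hierarchical Bayesian structure learned through evidential learning); the class name, field names, and numbers are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class NIGPrediction:
    """Evidential output for one target point (assumed Normal-Inverse-Gamma parameterization)."""
    gamma: float  # predicted mean of the target
    nu: float     # virtual evidence for the mean, must be > 0
    alpha: float  # inverse-gamma shape, must be > 1 for finite variances
    beta: float   # inverse-gamma scale, must be > 0

    def __post_init__(self) -> None:
        if not (self.nu > 0 and self.alpha > 1 and self.beta > 0):
            raise ValueError("need nu > 0, alpha > 1, beta > 0")

    def aleatoric(self) -> float:
        """Expected observation noise, E[sigma^2] = beta / (alpha - 1)."""
        return self.beta / (self.alpha - 1.0)

    def epistemic(self) -> float:
        """Uncertainty about the mean itself, Var[mu] = beta / (nu * (alpha - 1))."""
        return self.beta / (self.nu * (self.alpha - 1.0))

    def total(self) -> float:
        """Variance of the Student-t predictive distribution; equals aleatoric + epistemic."""
        return self.beta * (1.0 + self.nu) / (self.nu * (self.alpha - 1.0))


# Hypothetical numbers: more evidence (larger nu) shrinks only the epistemic part.
low_evidence = NIGPrediction(gamma=0.0, nu=2.0, alpha=3.0, beta=4.0)
high_evidence = NIGPrediction(gamma=0.0, nu=20.0, alpha=3.0, beta=4.0)
```

A plain Gaussian output would give only the single number `total()`; the NIG parameters let you read off both components separately.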

In the memory studies, one core process that is arguably shared across the semantic and episodic memory domains is cognitive control.

Neural Ordinary Differential Equations (NODEs) use a neural network to model the instantaneous rate of change in the state of a system. Despite their apparent suitability for dynamics-governed time series, NODEs have a few disadvantages. First, they cannot adapt to incoming data points, a fundamental requirement for real-time applications imposed by the natural direction of time. Second, time series are often composed of a sparse set of measurements that could be explained by many possible underlying dynamics. Neural Processes (NPs), in contrast, are a family of models that provide uncertainty estimation and fast data adaptation but lack an explicit treatment of the flow of time. To address these problems, the paper Neural ODE Processes, by Alexander Norcliffe and 4 other authors, introduces Neural ODE Processes (NDPs), a new class of stochastic processes determined by a distribution over Neural ODEs.

Neural Processes (Garnelo et al. 2018a, b) approach regression by learning to map a context set of observed input-output pairs to a distribution over regression functions. Each function models the distribution of the output given an input, conditioned on the context. NPs fit observed data efficiently, with complexity linear in the number of context input-output pairs. Conditional Neural Processes (CNPs) bridge neural networks with probabilistic inference to approximate functions of stochastic processes in a meta-learning setting.
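The encode, aggregate, decode structure behind these claims can be sketched in a few lines. This is a minimal, untrained CNP-style forward pass in pure Python, assuming a one-layer tanh encoder, mean aggregation over the context, and a linear decoder that outputs a Gaussian mean and a softplus-positive standard deviation. All weights are random placeholders and every function name here is hypothetical. The single mean over the context is what keeps the cost linear in the number of context pairs and makes the prediction independent of their order.

```python
import math
import random
from typing import List, Sequence, Tuple

Pair = Tuple[float, float]                                  # one (x, y) context observation
Params = Tuple[List[List[float]], List[float], List[float]]


def encode(x: float, y: float, w: List[List[float]]) -> List[float]:
    """Hypothetical encoder h: a single tanh layer applied to one (x, y) pair."""
    return [math.tanh(row[0] * x + row[1] * y + row[2]) for row in w]


def aggregate(reps: List[List[float]]) -> List[float]:
    """Mean over context representations: order-invariant, one pass, so O(n) in context size."""
    n = len(reps)
    return [sum(r[i] for r in reps) / n for i in range(len(reps[0]))]


def softplus(s: float) -> float:
    """Numerically stable log(1 + exp(s)), used to keep the standard deviation positive."""
    return max(s, 0.0) + math.log1p(math.exp(-abs(s)))


def decode(r: Sequence[float], x_target: float,
           v_mu: List[float], v_sigma: List[float]) -> Tuple[float, float]:
    """Hypothetical decoder g: maps (r, x*) to the mean and std of a Gaussian over y*."""
    feats = list(r) + [x_target, 1.0]  # representation, target input, bias term
    mu = sum(f * v for f, v in zip(feats, v_mu))
    sigma = softplus(sum(f * v for f, v in zip(feats, v_sigma))) + 1e-3
    return mu, sigma


def cnp_predict(context: List[Pair], targets: List[float], params: Params) -> List[Tuple[float, float]]:
    """Encode each context pair, average, then decode every target input (context must be non-empty)."""
    w, v_mu, v_sigma = params
    r = aggregate([encode(x, y, w) for x, y in context])
    return [decode(r, xt, v_mu, v_sigma) for xt in targets]


def random_params(dim: int = 4, seed: int = 0) -> Params:
    """Random, untrained weights; a real CNP would learn these across many tasks."""
    rng = random.Random(seed)
    w = [[rng.gauss(0.0, 1.0) for _ in range(3)] for _ in range(dim)]
    v_mu = [rng.gauss(0.0, 1.0) for _ in range(dim + 2)]
    v_sigma = [rng.gauss(0.0, 1.0) for _ in range(dim + 2)]
    return w, v_mu, v_sigma


params = random_params(dim=4, seed=0)
context = [(0.0, 0.1), (1.0, 0.9), (2.0, 4.1)]
preds = cnp_predict(context, [0.5, 1.5], params)  # one (mean, std) pair per target input
```

Training is omitted: a CNP would fit the encoder and decoder weights by maximizing the Gaussian log-likelihood of target points given their context, across many tasks.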

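For the Neural ODE side, the core mechanics are numerically integrating a rate of change and, for NDPs, sampling which ODE to integrate. The toy sketch below replaces the neural network with a fixed linear rate dx/dt = -k * x and uses a fixed-step Euler solver; the Gaussian over k stands in for the context-dependent distribution an NDP would infer with an encoder. The dynamics, the solver, and the names `euler` and `sample_ode_trajectories` are illustrative assumptions, not the NDP architecture itself.

```python
import random
from typing import Callable, List

Rate = Callable[[float, float], float]  # f(x, t) -> dx/dt


def euler(f: Rate, x0: float, t0: float, t1: float, steps: int) -> List[float]:
    """Integrate dx/dt = f(x, t) from t0 to t1 with fixed-step Euler; returns the whole trajectory."""
    h = (t1 - t0) / steps
    x, t = x0, t0
    trajectory = [x]
    for _ in range(steps):
        x = x + h * f(x, t)
        t = t + h
        trajectory.append(x)
    return trajectory


def sample_ode_trajectories(k_mean: float, k_std: float, x0: float, n_samples: int,
                            t1: float = 1.0, steps: int = 100, seed: int = 0) -> List[List[float]]:
    """Draw n_samples ODEs dx/dt = -k * x with k ~ N(k_mean, k_std^2) and integrate each one.

    In an NDP the distribution over the ODE would be inferred from the context set by an
    encoder; here k_mean and k_std are simply passed in (a simplifying assumption).
    """
    rng = random.Random(seed)
    trajectories = []
    for _ in range(n_samples):
        k = rng.gauss(k_mean, k_std)  # one draw = one ODE from the distribution
        trajectories.append(euler(lambda x, t, k=k: -k * x, x0, 0.0, t1, steps))
    return trajectories


# A wide spread in k gives diverse plausible dynamics; k_std = 0 collapses to a single ODE.
samples = sample_ode_trajectories(k_mean=1.0, k_std=0.5, x0=1.0, n_samples=5)
```

Narrowing `k_std` mimics what more context data would do in an NDP: fewer dynamics remain consistent with the observations, so the sampled trajectories agree more closely.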